August 25, 2018

Documentation for Life

As I started writing this post, I got blocked by the dang title. I couldn’t think of one, so I started writing in the hope that one would come to me.

It’s been a long time since anything was published to this blog. It’s not that I haven’t been writing; if anything, my volume of prose has gone up dramatically in the past year as I’ve started pushing for more and more documentation on the teams I work in and the projects I lead.

I think there are three reasons nothing has been published here in the past year.

For starters, there’s an infinite bikeshed of possibility in running your own blog, powered by software you maintain. See something you want to fix? The bottomless rabbit hole is there, ready and waiting for you to dive in. It becomes nearly impossible to resist the siren song of everything you want to do in code to make the blog better, forgetting, of course, that the point of the blog is the content, not the chrome.

Secondly, Dunning-Kruger. I’m past the hump of thinking I know everything about the subjects I want to talk about, but I don’t yet have the confidence to believe that my observations are valid. This position is, thankfully, changing: through my work at Patreon and with technical organizations, I’m starting to get some validation that my experience and opinions are valuable, and worth adding to the collective conversation.

Thirdly, and perhaps most importantly (today especially), my own brain chemistry is acting against my best interests at the moment. There is quite a lot from the last six years of my life that I’m processing, trying to heal, and covering on a regular basis in therapy. I am out of “fighting for survival” mode, and my brain is taking the break from constant survival stress to raise issues that I need to deal with, and which come with their own flavors of toxic brain chemistry.

So, what to do? As I was writing this, and wrote above about the fact that my prose output has actually increased, I realized that I value documentation to an obsessive degree, and that treating the posts in this blog as attempts at “documenting my life, and the experiences I have in it” might get me around some of the blocks listed above.

We’ll see how it goes, wish me luck.

anxiety

writing

October 22, 2017

A Brief Guide to Locking Down Your Mastodon Account

Mastodon is currently my favorite social network. I love it so much, I started my own server with some friends, and I’m proud to say it’s still going strong. You can read about The Wandering Shop in my previous post about why I started it.

Part of the reason I love Mastodon and The Wandering Shop is that it’s a social community where we get to define the rules, and we get to control who is and isn’t allowed in our neighborhood. The other shopkeeper, Annalee, and I do a good job of keeping out the riff-raff, as per our Code of Conduct. That said, if you aren’t on our server, or if you want a tighter grip over who you share with, Mastodon provides some of the most comprehensive privacy options I’ve seen in a social network.

So here are 6 things you can do to lock down your Mastodon account.

1. Develop a good relationship with your server admins

While Mastodon provides some excellent options for blocking people and servers just for your account, involving your server admins will help keep bad actors and bad instances off everyone’s feed, and help the neighborhood feel better as a whole. This is tougher on a large server like mastodon.social, but the admins there still try to respond to reports as they can. That “personal relationship” is one reason why I prefer the smaller servers.

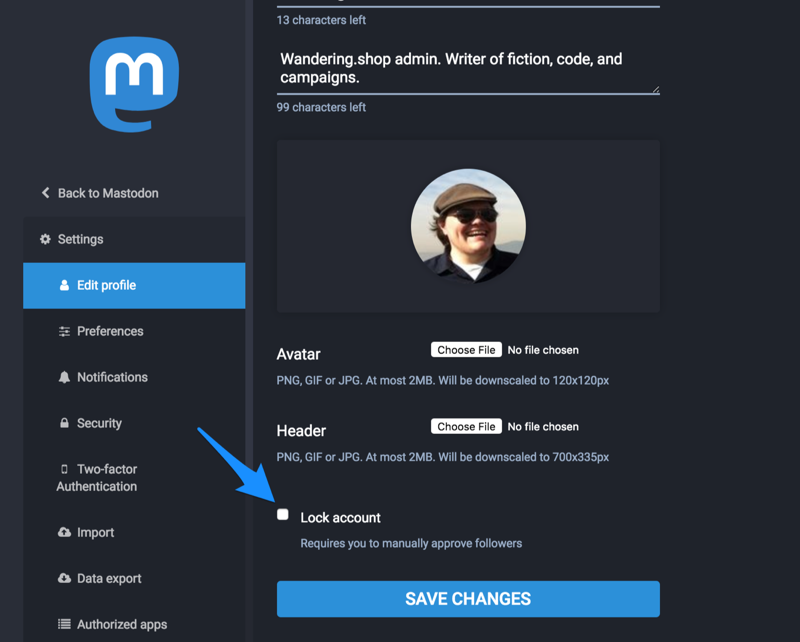

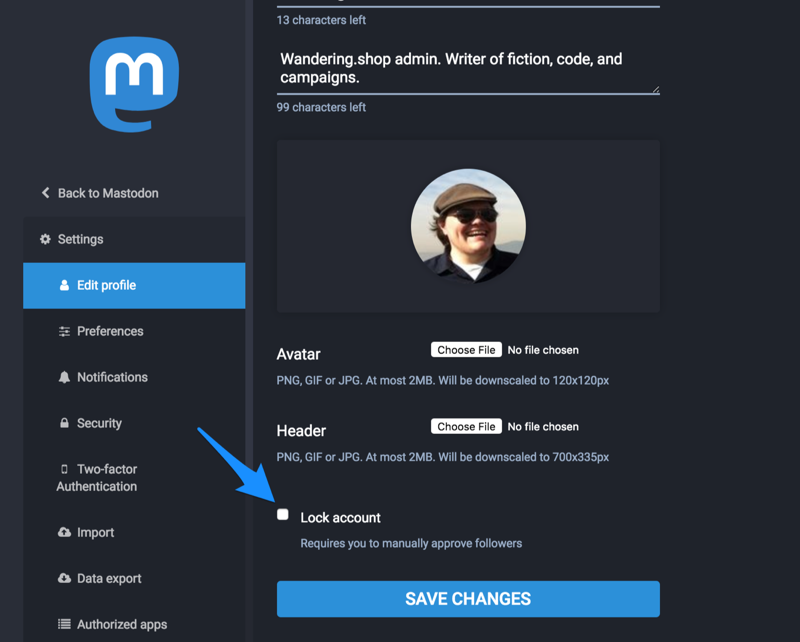

2. Lock your account

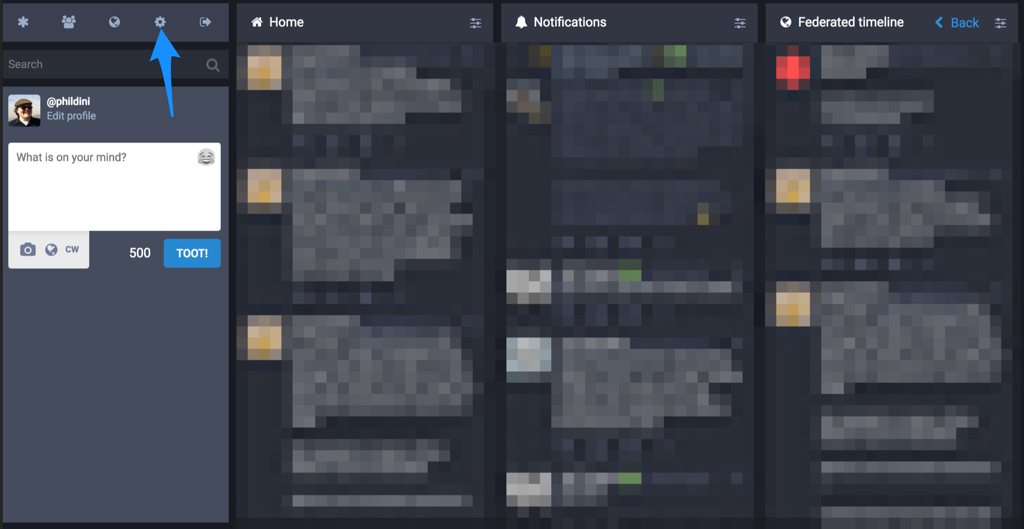

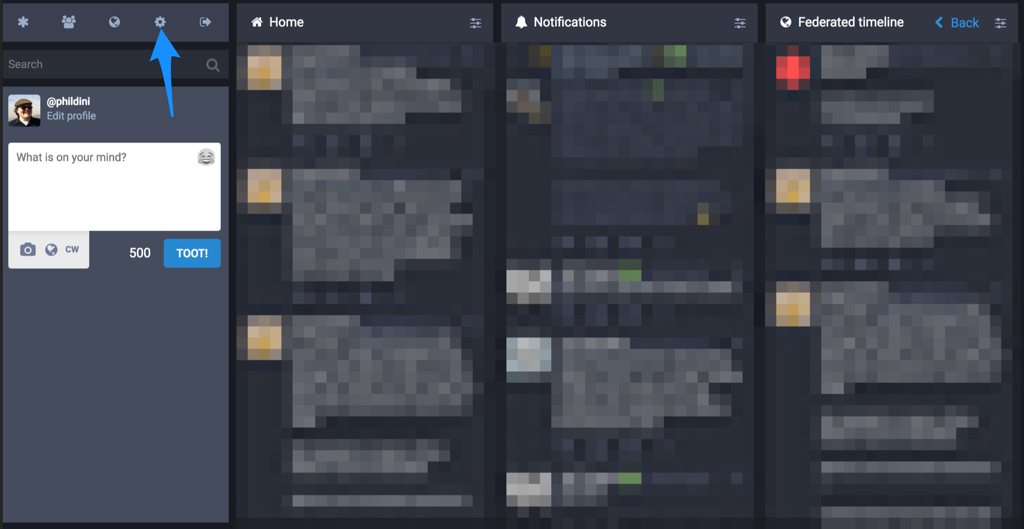

The next steps in this guide are going to be found in your Mastodon preferences, which you can find under the “Gear” tab in the Mastodon web interface. This guide, and all the screenshots, assume your server is on Mastodon 2.0, which many servers have moved to by this point.

In Mastodon, locking your account means that you must manually approve every follower. The Mastodon default is that anyone can follow anyone else, without approval. Turning this setting on means you’ll need to take action every time someone wants to follow you, but it also means no one can follow you without your permission. This is especially important if you want to…

3-4. Set privacy defaults on toots and unlist from search results

The default for toots that you post in Mastodon is “Public”, meaning everyone can see them and re-toot them. The next level of privacy is “Unlisted”, meaning anyone can see them if they go looking for them, or if they follow you, but they won’t show up on the public timelines, like the “Local” feed or the “Federated” feed. The final level of non-direct-message privacy is “Followers-only”. When a toot is followers-only, only your followers can see it, they CANNOT re-toot it, and it won’t show up in any public feeds.
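The three visibility levels above boil down to a few simple rules. The Python sketch below just restates those rules as code; it is a hypothetical model for illustration, not Mastodon’s implementation. The enum values are the strings Mastodon’s API uses for its `visibility` parameter, where followers-only is spelled `private`; direct messages are left out here, as they are in the description above.

```python
from enum import Enum


class Visibility(Enum):
    """The three non-direct-message privacy levels for a toot.

    Values match the strings Mastodon's API accepts for `visibility`;
    "Followers-only" is called "private" there.
    """
    PUBLIC = "public"
    UNLISTED = "unlisted"
    FOLLOWERS_ONLY = "private"


def shows_in_public_timelines(v: Visibility) -> bool:
    # Only public toots appear in the Local and Federated timelines.
    return v is Visibility.PUBLIC


def can_be_retooted(v: Visibility) -> bool:
    # Followers-only toots cannot be re-tooted; the other two levels can.
    return v is not Visibility.FOLLOWERS_ONLY


def can_see(v: Visibility, viewer_follows_you: bool) -> bool:
    # Public and unlisted toots are visible to anyone who goes looking;
    # followers-only toots are visible only to your followers.
    return v is not Visibility.FOLLOWERS_ONLY or viewer_follows_you


if __name__ == "__main__":
    for level in Visibility:
        print(
            f"{level.name:<15} public timelines={shows_in_public_timelines(level)!s:<5} "
            f"re-tootable={can_be_retooted(level)!s:<5} "
            f"visible to non-followers={can_see(level, viewer_follows_you=False)}"
        )
```

Each step down the list trades reach for control: unlisted only gives up the public timelines, while followers-only also gives up re-toots and visibility to non-followers.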

All of these options are available on a per-toot basis in every client I’ve seen, but if you’d like your toots to be more restricted by default, you can change that here. However you are most comfortable using Mastodon is the right way to use Mastodon, but it’s worth noting that interesting toots in the public timelines are how people find other interesting people on Mastodon, and removing your toots from those timelines by default may limit how many people get to appreciate what you have to offer.

On this same preferences page is the “Opt out of search engine indexing” option, which keeps your public profile and status pages from being crawled by search engines that respect things like robots.txt files.

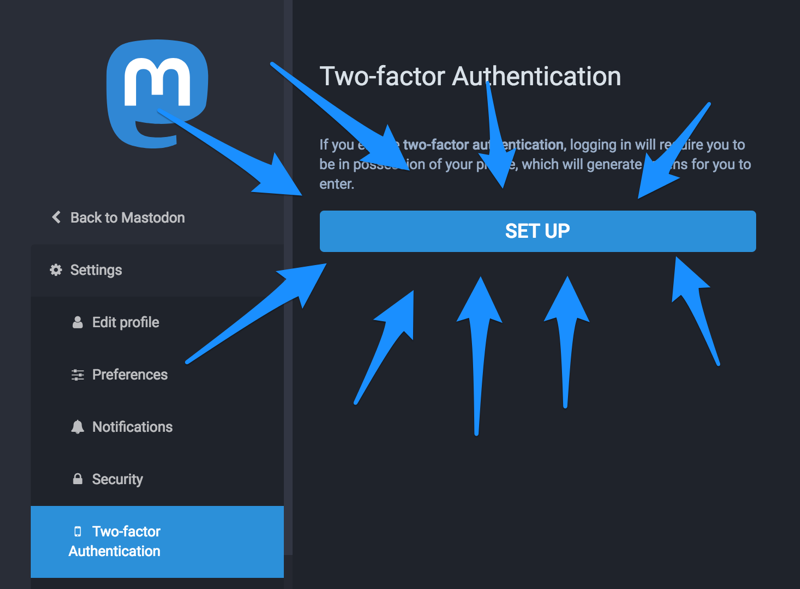

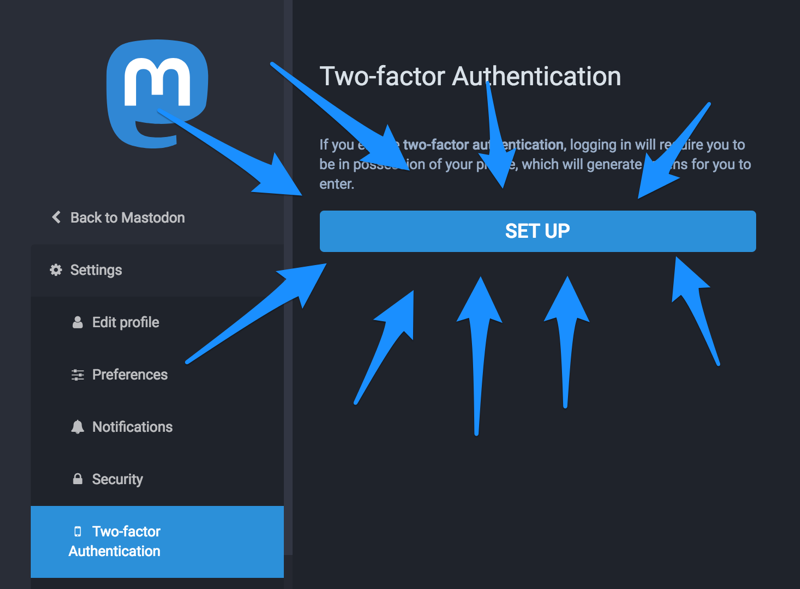

5. Set up 2FA for your account

This falls under “good internet hygiene”, but it’s a good idea to set up two-factor authentication for your account, and Mastodon has made it easy to do so. Accounts getting hacked sucks; turning on 2FA makes that less likely.

6. Donate to Mastodon development and encourage more privacy features

Mastodon is created and run by volunteers, and you can help support the lead developer through the Mastodon Patreon Page. Additionally, suggestions for more privacy features come up all the time in the Mastodon Github, and you can help make them a reality by pitching in your time and expertise.

open-source

tech

wandering-shop

security

privacy

June 17, 2017

Finding Your Tribe or: Why You Should Join Me at DjangoCon

“If you’re a programmer you should attend technical conferences to further your career.” Some variation of this was said to me so often when I was starting out as a writer of software that it became something like gospel. It became how I approached conferences; I was there to gain skills or a network that would help me further my career in some way, or further the interests of whoever my employer happened to be at the time.

If you approach conferences with this mindset, I think you will be disappointed. I certainly was. It took a couple of years of going to conferences before I realized (with the help of my wife and some close friends, I should point out) that I had the most fun when I focused less on how any particular conference was going to further my career and more on making genuine connections with people and exploring topics I actually found exciting.

This makes sense to me when I step back to think about it. Writing software, even when you’re on a large project or part of a large team, can be a very lonely, isolating business. We spend most of our time in our own heads, building castles of imagination that we make real through code. Given the viral strains of imposter syndrome, burnout, and depression that run through our industry, it can feel incredibly difficult to reach out and make connections, to share our problems and commiserate even with our closest peers.

This is the strength of the best conferences for me. Yes, you will learn things at a good technical conference. You will be exposed to ideas and approaches to problems (both technical and social) that you maybe hadn’t thought of before. Delighting in learning is a totally valid reason to attend technical conferences, and part of why I attend so many.

But the primary reason for me is finding and reconnecting with my tribe. Technical conferences, especially in the Python community, are filled with some of the best and brightest people I’ve had the fortune of knowing, and, more than that, are filled with people who are kind, and willing to listen, and also want to connect with others in their community. I will tell you a secret: Many of the best and brightest, those you might be coming to a conference specifically to see speak, are coming because they also want to make those connections. They also want to reach out, commiserate, and find their tribe.

Now let’s talk about DjangoCon, specifically DjangoCon US, which is coming up in August. PyCon is the big conference in our community, and it draws the biggest crowds. PyCon is excellent, and I enjoy going every year. I connect with people at PyCon that I basically don’t see for the rest of the year. But where PyCon is the big yearly reunion with the whole community, and can therefore be overwhelming, DjangoCon is the smaller gathering with friends. Where PyCon is, in many ways, a week-long festival for the Python community, DjangoCon is closer to an intimate dinner party, where you can hear more of each other’s conversations, and join in some incredible discussions.

If you’re still searching for a tribe, or want to reconnect with the Python and Django community, and want to do so in an intimate gathering of friends, I hope you’ll consider attending DjangoCon this year. As an added bonus, you’ll get to hear me and the other speakers deliver a frankly incredible lineup of talks. Seriously, I get excited just looking at it.

Now, some people might be turned off by the fact that the conference is in Spokane. It’s a little out of the way, this is true, but this is one of the reasons I get excited about conferences: Chances to visit places I wouldn’t visit otherwise. I’ll also say that the best breakfast I ever had was in a small town in Washington, and I’m excited for the brunch game in Spokane.

If you’re still not sure that DjangoCon is where you’ll find your tribe, I direct you to the opening talk: “The Shy Person’s Guide to Tech Conferences”. DjangoCon is here for you, and we can’t wait to meet you.

Hope to see you in Spokane.

P.S. About that “technical conferences will further your career” thing: nothing has done more for my career, and my well-being as a human, than having a collection of real friends that I’ve met at conferences.

django

advice

python

pycon

djangocon

June 13, 2017

Introducing Epithet

There are many challenges to running an Open Source organization, but the one that I have personally felt the pain of again and again is that our tooling is awful. Github (and realistically we’re all using Github at this point) still feels in many ways like a tool designed around the idea that all the action is going to happen in one repo. This may not be entirely the fault of Github. Git itself is very tightly coupled to the idea that anything you care about for a particular action is going to happen in one, and only one, repository.

When Github released Organizations, the world rejoiced, because we could now map permissions and team members in our source repository the way they were mapped in the real world. Every new feature Github adds to its Organizations product causes more rejoicing, because so many teams work across multiple repos, and the tooling around multiple repos is still awful.

The awfulness of this tooling is probably a strong factor in the current trend towards “microservice, monorepo” code organization, but that’s another post.

I’ve been the equivalent of a core contributor for a half dozen Github organizations, and I’ve noticed that one area where the tooling is especially lacking is around labels. I’ve seen labels used to designate team or individual ownership, indicate the status of pull requests, signal that certain issues are friendly for beginners, and even serve as deploy targets for chunks of code. It’s fair to say that labels form a core tool in the infrastructure of every team I’ve seen using Github, and yet the tooling Github exposes for labels is painfully lacking.

I could go on and on about this, but my goal here isn’t necessarily to make Github feel bad. I hope they’re working on better label tooling, and if they want ideas, boy am I willing to give them. But there is one label-specific wall I kept banging my head against, and that is label consistency across all the repos of an Organization.

Some of you read that and feel remembered pain. I feel that pain with you, and we are here for each other. Some of you might have no idea what I’m talking about, so I’ll explain a bit more.

Let’s say you want to add a “beginner-friendly” label to all the repos in your Open Source Organization, so that new contributors can find issues to start with. Right now on Github, you would need to go into every repo, click into the Issues page, click into the Labels tab, and manually create that label. There are no “Org-wide labels”, and no tool for easily creating and updating labels across all the repos of an organization.

Until now.

Introducing Epithet, a Python-based command line tool for managing labels across an organization. You give it a Github key, organization, and label name, and it will make sure that label exists across all the repos in your org. Give it a color, and it’ll make the color of that label consistent across all repos as well. Have you decided you’re done with a particular label? Epithet can delete it from all your repos for you. Are you using Github Enterprise? Epithet supports that too.
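To make “make sure that label exists across all the repos” concrete, here is a minimal sketch of the planning step a tool like Epithet might perform. This is not Epithet’s code or command-line interface: the `plan_label_sync` function, the `Action` type, and the fallback color `ededed` are all hypothetical. It assumes you have already fetched each repo’s current labels (name to hex color) from Github, and it only works out which repos need the label created, recolored, or deleted; actually applying those changes through Github’s label API is left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical fallback color for newly created labels (an arbitrary choice).
DEFAULT_COLOR = "ededed"


@dataclass(frozen=True)
class Action:
    """One change to make in one repository."""
    repo: str
    kind: str  # "create", "update", or "delete"
    label: str
    color: Optional[str] = None


def plan_label_sync(
    repo_labels: Dict[str, Dict[str, str]],
    label: str,
    color: Optional[str] = None,
    delete: bool = False,
) -> List[Action]:
    """Work out what it takes to make `label` consistent across every repo.

    `repo_labels` maps repo name -> {label name: hex color without '#'},
    i.e. the labels each repo has right now.
    """
    actions: List[Action] = []
    for repo in sorted(repo_labels):
        existing = repo_labels[repo]
        if delete:
            # Removing a label org-wide: only touch repos that still have it.
            if label in existing:
                actions.append(Action(repo, "delete", label))
        elif label not in existing:
            # Missing label: create it, with the requested color if one was given.
            actions.append(Action(repo, "create", label, color or DEFAULT_COLOR))
        elif color is not None and existing[label].lower() != color.lower():
            # Label exists but with a different color: recolor it.
            actions.append(Action(repo, "update", label, color))
    return actions


if __name__ == "__main__":
    # Made-up example data for three repos in one organization.
    current = {
        "repo-a": {"beginner-friendly": "00ff00"},
        "repo-b": {},
        "repo-c": {"beginner-friendly": "ff0000"},
    }
    for action in plan_label_sync(current, "beginner-friendly", color="00ff00"):
        print(action)
```

Keeping the plan separate from the API calls means you can preview every change (a dry run) before it touches a single repo, which is reassuring when one typo would otherwise be copied across an entire organization.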

Epithet exists to fill a very particular need in open (and closed) source Github organizations, and it’s still pretty alpha. We use it for the BeeWare project, and it might be used soon for syncing labels in the Ragtag organization. You can start using it today by checking out the (sadly small) documentation, and if there’s a feature missing you’d like to see, I’m happy to work with you on getting a PR submitted.

Managing Open Source organizations is hard. My hope is Epithet makes it a little bit easier.

WordFugue is independent, and we will never run traditional ads. If you like what we’re doing, consider donating to phildini’s Patreon, or buy a book from our affiliate store. This week we’re reading Patrick Rothfuss’ “The Name of the Wind”.

Special thanks to Katie Cunningham and Kenneth Love for reviewing this post.

projects

python

open-source

git

June 6, 2017

Only We Can Save Pythonkind

Python is the best technical community I’ve seen, and close to the best community I’ve seen at this scale. If you’ve been programming for any length of time, you’ve seen technologies and frameworks and languages rise and fall. We often bemoan the loss of certain ideas from these fallen works, but rarely talk about the communities that fell with them. Python is in many ways the most deliberate community that I’ve ever seen around a technology, and my life will be worse if it ever falls.

I think the Python Community is either near an inflection point, or right on top of one. What do I mean by that? I mean that, over the next five to ten years, I see two paths for the Python community and ecosystem. (Because “Python community and ecosystem” is long to type and read, I’m going to use “Python” to mean “the Python community and ecosystem” for the rest of this post.)

Path one, the one I hope we take, is the one where we take active steps to grow Python. It means that we are continuing to welcome new people into the community, from areas we never considered. It means we have a surplus of good, well-paying jobs for Pythonistas at every experience level. It means the companies and organizations creating those jobs recognize what Python gives them, and sponsor the ecosystem and community events to be better than ever.

Path two is the path I’m worried about. It’s the path where we expect Python to take care of itself, where we collectively take a more passive approach to the community that so many of us enjoy, and which has given much to many of us. I think this path results not in Python dying overnight, but in a slow decline, in Python becoming more and more irrelevant over time. It results in fewer Python jobs, and more Go or Node or “insert language here” jobs. It results in Python being pigeonholed into certain industries, and new Pythonistas being forced to learn some other language to start their career. It results in our major events slowly shrinking over time, and a time when we start counting attendees down instead of up.

I’m not going to try too hard to convince you that this is where we are, that we are at or close to a fork in the road. It’s what I believe, and I think some of you might agree already, but here are some of the things I’ve noticed that make me think we’re close to such a point.

- PyCon 2017 was fantastic, and had more attendees than ever, but had noticeably fewer booths in the expo hall than last year, and I believe fewer sponsors overall.

- Other Python and Django conferences, especially the smaller regional conferences, are finding it harder and harder to get sponsors. Some of this is the market tightening, some of this is companies moving out of Python, or not feeling like they get a return on their investment.

- More programs and code schools are using Python as their teaching language, but for many graduates the entry-level positions just aren’t there. Some of this is, again, the market not hiring entry-level, and some of this is the companies we work for being unwilling to take risks and train.

Based on the above, and some other feelings and anecdotes, I think we’re right on top of the fork in the road. So what do we do about it? We take deliberate actions to help grow Python. Here’s what I’m planning to do over the next year:

- Running for the PSF Board of Directors. Why do I think being on the Board is important in the context of this post? Because I can push for growth at the Python organization level, and I can get things done as a Board member that I can’t get done as a non-Board member of the PSF. Anyone reading this can, and should, run for the Board if they feel so inclined. But I’d also love to see more participation in the PSF committees, especially along the lines of fundraising and outreach. No matter the outcome of the election, I’m going to continue my work on the Sponsorships committee, and keep doing the other things on this list.

- Reaching out to University Computer Science departments about using Python. I’m already in the process of arranging a guest lecture with classes in my old CS department about life as a professional Software Engineer. I’m planning to add specifics about how I use Python (which is more and more the introductory teaching language) in my professional life. My hope is I can help connect classroom lessons to professional Python just by showing up and giving a small talk.

- Reaching out to University Science departments about Python. If the keynotes at PyCon 2017 taught us anything, they taught us that Python is an incredible resource in research science departments, statistics departments, anywhere deep thinkers need to do computation and visualization. I’m hoping to put together a “Python in Science” roadshow to help with this, but the reality is Software Carpentry is years ahead of me in making this happen, and anything we can do to help them is almost certainly worthwhile.

- Being a Core Contributor to the BeeWare project. Python has great stories around developing web applications, working in the sciences, and doing systems tasks. Our stories around developing consumer apps are lacking, and I don’t think they need to be. BeeWare, and many others, are taking a stab at filling this gap, but for you, reading this, the action item could be “find a Python project in an area you care about, and work at making it the best it can be.”

- Volunteering time to get more companies and projects started in Python. This one is more nebulous, and I haven’t done it yet but plan to soon. I’m planning to reach out to VCs and incubators and especially hackathons and say “Here’s my background, I’m happy to show up to any event and donate my time to help, but I’m only going to help with Python.” I don’t know how this is going to go over, but this idea has some exciting potential. If we want more jobs in Python, we need to be pushing for more companies and projects to use Python, right from the beginning.

If any of these ideas seem interesting to you, feel free to copy them! If they seem interesting but daunting, feel free to reach out to me ([email protected]) to chat about them. If these ideas inspired your own ideas in a different direction, great! Tell me about what you’re doing and I’ll share it far and wide. My goal in listing these ideas isn’t to toot my own horn, but to start a conversation about methods for Python outreach, in the hope of growing Python.

Of course, I could be wrong in my beliefs. (I’d actually love to be corrected with stories or data that show I’m wrong, and would happily share them here.) What if Python is healthy, and is going to grow consistently over the next decade?

Then I’d still do everything I’m planning to do, and encourage others to do the same. I think everything we pour into the Python community is valuable, and any new Pythonista we bring in enriches us all in ways we can’t possibly anticipate.

If I’m wrong, and we make Python better for no reason, we’ll still have a better Python.

python

open-source

psf

beeware

May 25, 2017

I’m entering the arena for the third year. I’m running to be a Director for the Python Software Foundation. This post will help explain why.

There’s an argument that anything I write here should instead be in my candidate statement. I don’t disagree, and the reason I’m writing here instead of there relates to one of the things I’d like to change: Nominating for the Board of Directors requires editing the Python Wiki. The Python Wiki is hard to use, the documentation on it is not well-exposed, and a room full of Pythonistas during the PyCon sprints (including one current Board member) couldn’t tell me who maintains it. Beyond that, you have to answer somewhat-esoteric Python trivia to submit your edits.

I’d like a clear definition of what the Board and the community think the Wiki is for, and regular check-ins on whether it’s serving the community well. I’d like to see us running “PSF Sprints”, where Board members or anyone else interested are writing documentation about how the PSF is run. Our election processes and funding processes and budget processes and outreach processes should be checked on regularly, and I’ll be pushing for more transparency and openness about how we run the business side of Python.

Speaking of outreach, I’d like the PSF to be doing more of it, and funding groups who are growing the Python community. There will be more about this in a future post, because I have plans on how to get our community of Pythonistas back out into the world growing the community at universities and hackathons and incubators and corporations. I want every group and individual trying to grow Python to know that the PSF has their back, and will put money behind them.

I also want to be on the Board to remind the PSF that they have power beyond grant giving. Yes, the majority of what the PSF Board has done in recent years has been giving grants to organizations around the world. That work is excellent, and I want to see it increase. But the PSF is also in a unique position to be a promotion clearing house and force multiplier for good ideas in the community. When good learning materials are written, they should be easily findable from the official Python websites. When Python events are being held, the PSF should be a cheerleader, spreading the word about what’s happening in the community.

These are the things I plan to do as a PSF Director to help grow Python. I haven’t even gotten into the investment I want to see us putting into our core tools and platform infrastructure; that will have to be another post and my brain is a little fried from PyCon.

So the only question left is: Why do I need to be on the Board to do these things? And the answer is I don’t. These are things I’m going to push for no matter what. But the PSF is in many ways the voice of the community, and I want to see that voice brought to bear on the issues that will be affecting our community for the next year and the next decade. I think I can help use that voice to speak for the Pythonistas of the future, and I hope you agree.

python

psf

pycon